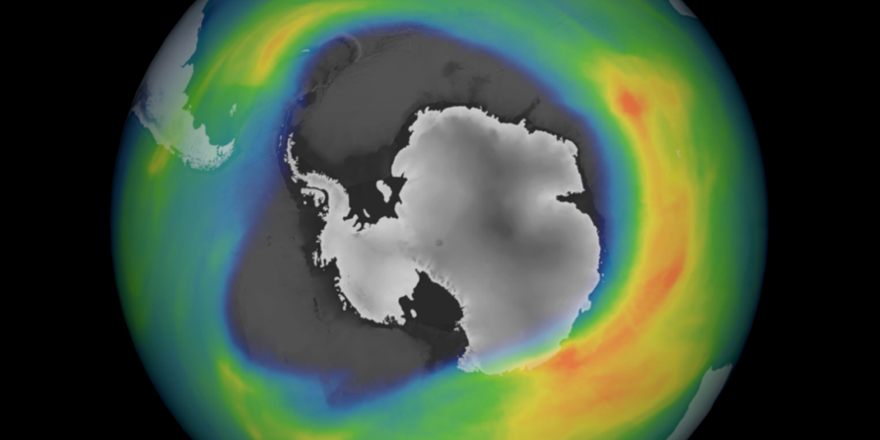

Ozone depletion area over Antarctica, 2020. Image taken by instruments aboard the Copernicus Sentinel-5P satellite by the European Space Agency.

It is the theory that determines what we see.

– Albert Einstein

In the early 1980s, an area of very low ozone (an ozone ‘hole’) was discovered over Antarctica. What was especially surprising was that it had been growing, and was visible to the right systems for quite a while, without being reported. Why? The monitoring system’s design.

It turned out that the systems for detecting ozone concentration were programmed to reject very low readings (below 180 Dobson units) as a quality control measure, since there had never been a reading below 200.

Unfortunately, the data for October 1983 contained many, many such readings – all of which were automatically discarded. A hole in the data hid the hole in the ozone layer.

Luckily, two things prevented the hole from going undiscovered forever:

- First, the system was set to notify people when a reading was discarded, so that someone could manually look into it and figure out why it had happened. (In this case, people did so.)

- Second, a group of researchers from the British Antarctic Survey analyzed the data independently and brought up the hole in a now-famous letter to the journal Nature in 1985
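To make the difference between discarding and flagging concrete, here is a minimal sketch in Python. It is not the actual monitoring software: the function names, the 5% review trigger, and the sample readings are all hypothetical, and only the 180 Dobson unit cutoff comes from the account above.

```python
from dataclasses import dataclass, field
from typing import List

# Cutoff quoted in the text above; everything else here is an assumption.
MIN_PLAUSIBLE_DU = 180.0


@dataclass
class ScreeningResult:
    accepted: List[float] = field(default_factory=list)
    flagged: List[float] = field(default_factory=list)  # kept for human review


def screen_readings(readings, min_plausible=MIN_PLAUSIBLE_DU):
    """Split readings into accepted values and values flagged for review."""
    result = ScreeningResult()
    for value in readings:
        if value < min_plausible:
            # Set aside rather than silently dropped, so a person can look.
            result.flagged.append(value)
        else:
            result.accepted.append(value)
    return result


def needs_review(result, max_flagged_share=0.05):
    """Return True when flagged readings are too common to be stray errors."""
    total = len(result.accepted) + len(result.flagged)
    return total > 0 and len(result.flagged) / total > max_flagged_share


# Hypothetical readings in Dobson units, for illustration only.
sample = [310.0, 295.0, 175.0, 160.0, 150.0, 300.0]
screened = screen_readings(sample)
print(screened.flagged)
print(needs_review(screened))
```

The point of the sketch is the second function: a filter that keeps what it rejects, and raises its hand when rejections pile up, leaves a trail that someone can follow. A filter that simply throws readings away does not.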

Surveillance balloon over Billings, Montana, Feb 1, 2023. Photo by Chase Doak.

Source: Wikipedia (CC4.0)

Almost 40 years later, a similar situation unfolded when a Chinese spy balloon was spotted over (and later shot down over) the United States. Following the discovery, US air defenses were recalibrated to stop ignoring objects that were flying slowly and emitted little or no heat – and when that was done, they immediately discovered several similar objects.

System limitations had made it possible for a large balloon, one that was easily visible to radar and tens of thousands of feet up, to metaphorically “fly under the radar.” It hadn’t flown under the radar, but it had been beneath notice.

Sustainability Holes

These examples are replicated many-fold when it comes to environmental and social issues. In business, precious few companies are truly ready when a big environmental or social concern rears its head – for example, in spite of the availability of pandemic insurance before COVID (but after Swine Flu, Bird Flu, SARS, MERS, etc.), only a single company actually purchased it.

When you look…through rose-colored glasses, all the red flags just look like flags.

– Bojack Horseman (by Raphael Bob-Waksberg)

It’s not just COVID, either. Consider a different thinking hole: climate risk. For years, I’ve been making three mutually reinforcing points to financially oriented audiences:

- Companies are far more vulnerable to climate disruptions than they think (because of submerged risks, such as that their suppliers, the ports they use, their customers, or others are affected)

- Companies purchase very little formal insurance against many of these risks*

- Since they don’t have formal insurance against these risks, they are by definition self-insuring. But they don’t think of it that way, and therefore they’re unintentionally self-insured, meaning that they’re not taking the risk-reducing actions they should

* Companies may have natural disaster insurance, but they’re extremely unlikely to have any sort of coverage for a loss of revenue caused by their customers experiencing a natural disaster, or a war spiking the price of oil, or a pandemic causing the price of container shipping to skyrocket (e.g., from around $2,000 to over ten times that much).

Soyuz rocket launch over Russia. Photo by Statuska

Normally, the reaction from finance professionals and other executives is to say something like, “I see. That makes sense, but I hadn’t thought about it that way.”

Like the US air-defense system, these executives had all the tools (risk models, insurance and self-insurance best practices) needed to incorporate climate risk in their thinking in a more complete way, but their systems weren’t set up to focus on it.

Photo by Broesus / Unsplash

Plugging the Hole

When there is a hole in awareness, there is a hole in action. Luckily, the ozone hole was discovered, and international action to address the problem followed. And when US air defenses were recalibrated and detected more objects, action followed there too.

But companies still allow far too much risk to go unseen – and un-acted-on – because their systems are set up wrong. They’re primarily set up to look at yesterday’s risks, along with those that are right in front of them.

But this is a solvable problem. Of course, you can tactically become more aware of climate disruptions and pandemic risks and do more to prevent and prepare for them. That’s a good start.

Photo by Alev Takil / Unsplash

Going beyond responding to a few specific issues – developing the capability to see more clearly – is even better. One way to do this is to let your values guide what you see, so that they become “values lenses.”

There’s more about this* in my book The Value of Values, including what kinds of values and how to turn them into better lenses, but here’s a start:

When you let the desire to make the world a better place guide where you look (including where people are more vulnerable) and what you look at (e.g., social and environmental issues), you help close unintentional holes in your thinking. And you see much more clearly – and much farther.

* There’s more about values lenses, COVID insurance, and unintentional self-insurance in my book The Value of Values (which will be published by MIT Press in February 2024).